Your music sounds generic not because your plugins are poor, but because you are trapped in the default workflows they were designed to encourage.

- Preset-scrolling and trend-chasing lead to predictable, homogeneous sounds that lack a personal signature.

- True sonic identity emerges from deconstructing sounds to their basic elements and applying compositional logic to timbre itself.

Recommendation: Stop searching for the perfect preset and start treating sound design as a core compositional act by building textures from the ground up.

You’ve invested in the latest software synthesizers, your plugin folder is bursting with critically acclaimed emulations, and you follow every tutorial. Yet, a nagging frustration persists: your electronic elements sound sterile, generic, and strangely dated. They lack the distinct sonic signature that defines the producers you admire. You hear your factory pad sound in a dozen other tracks, and your carefully crafted chord progressions feel lifeless, failing to translate the complexity you envisioned.

The common advice is a spiral of consumption: buy a new sample pack, get that “warm” vintage emulation, or find a better preset. But for the serious composer or producer, this rarely solves the underlying issue. The problem isn’t a deficiency in your tools; it’s an over-reliance on the frictionless, predictable workflows they promote. These systems are optimized for speed and accessibility, inadvertently guiding millions of users down the same creative paths, resulting in a landscape of sonic homogeneity.

This article argues for a radical shift in mindset. The path to a unique sound lies not in acquiring more, but in intentionally deconstructing what you already have. It requires treating sound design not as a preliminary step, but as a compositional practice as vital as harmony or rhythm. We will explore why default settings are an aesthetic trap, how to build a signature sound from a single oscillator, and why the real difference between hardware and software is cognitive, not auditory. Ultimately, you will learn to apply a composer’s logic to timbre itself, ensuring your electronic textures finally sound like you.

This guide provides a structured approach to developing a unique sonic identity. We’ll deconstruct the problem of homogeneity, offer practical synthesis techniques, and reframe technical challenges as creative opportunities.

Summary: A Composer’s Path to Escaping Sonic Homogeneity

- Why Does That Factory Pad Appear in 500 Other Tracks This Year?

- How to Build a Signature Pad Sound from a Basic Oscillator in 30 Minutes?

- Vintage Hardware vs Plugin Emulation: Can Listeners Actually Hear the Difference in Blind Tests?

- The Headroom Mistake That Makes 70% of Bedroom Producer Mixes Sound Congested

- When to Introduce Electronics into an Acoustic Ensemble Piece: From the Start or as Development?

- Why Do Your ii-V-I Progressions Sound Generic Despite Perfect Voice Leading?

- Why Does Every Midjourney User’s Portfolio Look Identical After 6 Months?

- Why Do Your AI-Assisted Artworks Look Like Everyone Else’s AI Art?

Why Does That Factory Pad Appear in 500 Other Tracks This Year?

The phenomenon of hearing the same synth patch across countless productions isn’t just your imagination; it’s a direct consequence of a production ecosystem built on micro-trends and accessible sound libraries. Platforms like Splice have democratized access to high-quality sounds, but they’ve also created powerful feedback loops of sonic homogeneity. When a specific sound or genre gains traction, its constituent elements become massively popular. For instance, a recent report highlighted a staggering 778% year-over-year increase in Afro house downloads on Splice, indicating a vast number of producers are drawing from the same sonic well.

This trend-driven consumption turns presets and popular samples into a creative shortcut, but one with a significant cost. Instead of building a sound to serve a specific compositional need, the producer adapts their composition to fit a pre-made sound. The result is music that feels contemporary for a moment but quickly becomes dated as the trend cycle moves on. It’s the musical equivalent of fast fashion, where individuality is sacrificed for fleeting relevance.

The core issue is a passive workflow. Scrolling through presets until one “fits” is an act of curation, not creation. It outsources timbre, the most personal aspect of electronic music, to the software designer. For a composer, the trend data behind these libraries is a double-edged sword. According to Mark Mulligan, Managing Director at MIDiA Research, “In a music economy increasingly shaped by micro-trends, sample usage offers one of the clearest signals of what’s coming next.” That signal is valuable for analysis, but for a composer it is a warning: following it too closely guarantees you’ll sound like everyone else.

How to Build a Signature Pad Sound from a Basic Oscillator in 30 Minutes?

The most powerful antidote to preset fatigue is to embrace intentional friction. Instead of starting with a complex, fully formed sound, begin with the most fundamental building block: a single oscillator generating a basic waveform (like a sawtooth or square wave). This forces you to make deliberate, creative decisions at every stage of the sound’s development. It shifts your role from a consumer of sounds to a true architect of timbre. The process isn’t about achieving technical mastery overnight; it’s about re-engaging the composer’s ear.

A signature pad can be developed methodically. First, focus on the oscillator section. Try detuning a second oscillator slightly to introduce a rich, chorusing movement. Next, move to the filter (VCF). Instead of a static filter setting, assign a slow-moving LFO or a long-attack envelope to the cutoff frequency. This gives the sound its own internal life and emotional arc. Finally, shape the amplitude (VCA) with an envelope that has a slow attack and a long release, creating the classic “pad” contour. The key is that every choice—from the LFO speed to the amount of filter resonance—is yours, directly shaping the sound’s character.
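The three stages above (detuned oscillators, a slowly modulated filter, a slow amplitude envelope) can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a production synth: the function names, the 7-cent detune, the 300 to 1500 Hz cutoff sweep, and the low sample rate are all hypothetical choices made for this example, the sawtooth is naive (not band-limited), and the filter is a simple one-pole low-pass standing in for a real VCF.

```python
import math

SAMPLE_RATE = 8000  # assumption: low rate keeps this pure-Python sketch fast


def detune(freq_hz, cents):
    """Shift a frequency by a number of cents (100 cents = 1 semitone)."""
    return freq_hz * 2 ** (cents / 1200)


def saw(phase):
    """Naive sawtooth in [-1, 1) for a phase measured in cycles."""
    return 2.0 * (phase % 1.0) - 1.0


def pad_envelope(t, attack, hold_end, release):
    """Slow linear attack, sustain until hold_end, then linear release."""
    if t < attack:
        return t / attack
    if t < hold_end:
        return 1.0
    return max(0.0, 1.0 - (t - hold_end) / release)


def render_pad(freq_hz=110.0, cents=7.0, seconds=2.0,
               attack=0.8, release=0.8, lfo_hz=0.25):
    """Render a mono pad as a list of floats in [-1, 1]."""
    n = int(SAMPLE_RATE * seconds)
    second_osc_hz = detune(freq_hz, cents)
    hold_end = seconds - release
    lowpass_state = 0.0
    out = []
    for i in range(n):
        t = i / SAMPLE_RATE
        # Oscillators: two slightly detuned saws create a slow chorusing beat.
        raw = 0.5 * (saw(freq_hz * t) + saw(second_osc_hz * t))
        # Filter: a slow sine LFO sweeps the cutoff between 300 and 1500 Hz.
        lfo = 0.5 * (1.0 + math.sin(2 * math.pi * lfo_hz * t))
        cutoff_hz = 300.0 + 1200.0 * lfo
        alpha = 1.0 - math.exp(-2 * math.pi * cutoff_hz / SAMPLE_RATE)
        lowpass_state += alpha * (raw - lowpass_state)
        # Amplifier: slow attack and long release give the pad contour.
        out.append(lowpass_state * pad_envelope(t, attack, hold_end, release))
    return out
```

With these example values the second oscillator sits about 0.45 Hz above the first, so the chorusing cycle repeats roughly every two seconds. Changing `cents`, `lfo_hz`, or `attack` is the code equivalent of turning the knobs described above, and each one audibly changes the character of the result.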

This process of building from scratch isn’t just a technical exercise; it’s a compositional one. You’re not just “making a pad,” you’re designing a specific textural element to fulfill a musical function. It’s this intentionality that separates a signature sound from a generic preset.

Action Plan: Auditing a Preset to Make It Your Own

- Deconstruction: Load a factory preset and immediately disable all effects (reverb, delay, chorus). Isolate the core sound engine. What are its raw oscillators and filter settings?

- Envelope Reshaping: Radically alter the VCA and VCF envelopes. Turn a short pluck into a long pad, or a swelling sound into a percussive stab. How does this change its musical function?

- Modulation Re-routing: Identify the primary modulation sources (LFOs, envelopes). Re-route them to different destinations. If an LFO is modulating pitch, assign it to filter cutoff or panning instead.

- Filter Character Test: Sweep the filter cutoff and resonance manually across their full range. Identify the “sweet spots” and moments where the sound breaks or becomes interesting. Automate this.

- Effects as an Instrument: Re-introduce effects one by one, but treat them as part of the synthesis. Use extreme reverb settings or granular delay to transform the source into something unrecognizable.
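The modulation re-routing step in this plan can be pictured as editing a small routing table. The sketch below is purely illustrative: `Route`, `reroute`, and the source and destination names are hypothetical and do not correspond to any particular synthesizer’s API. It only shows the idea of keeping a modulation source and its depth while pointing it at a new destination.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Route:
    """One modulation routing: a source drives a destination at some depth."""
    source: str
    destination: str
    depth: float


def reroute(matrix, source, old_destination, new_destination):
    """Return a new matrix with matching routes pointed at new_destination."""
    return [
        replace(route, destination=new_destination)
        if route.source == source and route.destination == old_destination
        else route
        for route in matrix
    ]


# Hypothetical preset: an LFO wobbling pitch, an envelope opening the filter.
preset = [
    Route("lfo1", "pitch", 0.3),
    Route("env2", "cutoff", 0.8),
]

# Keep the same LFO and depth, but aim it at the filter cutoff instead.
variant = reroute(preset, "lfo1", "pitch", "cutoff")
```

Because `reroute` returns a new list and leaves `preset` untouched, the original and the variant can be compared side by side, which mirrors the audit mindset: change one routing, listen, and decide.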

Vintage Hardware vs Plugin Emulation: Can Listeners Actually Hear the Difference in Blind Tests?

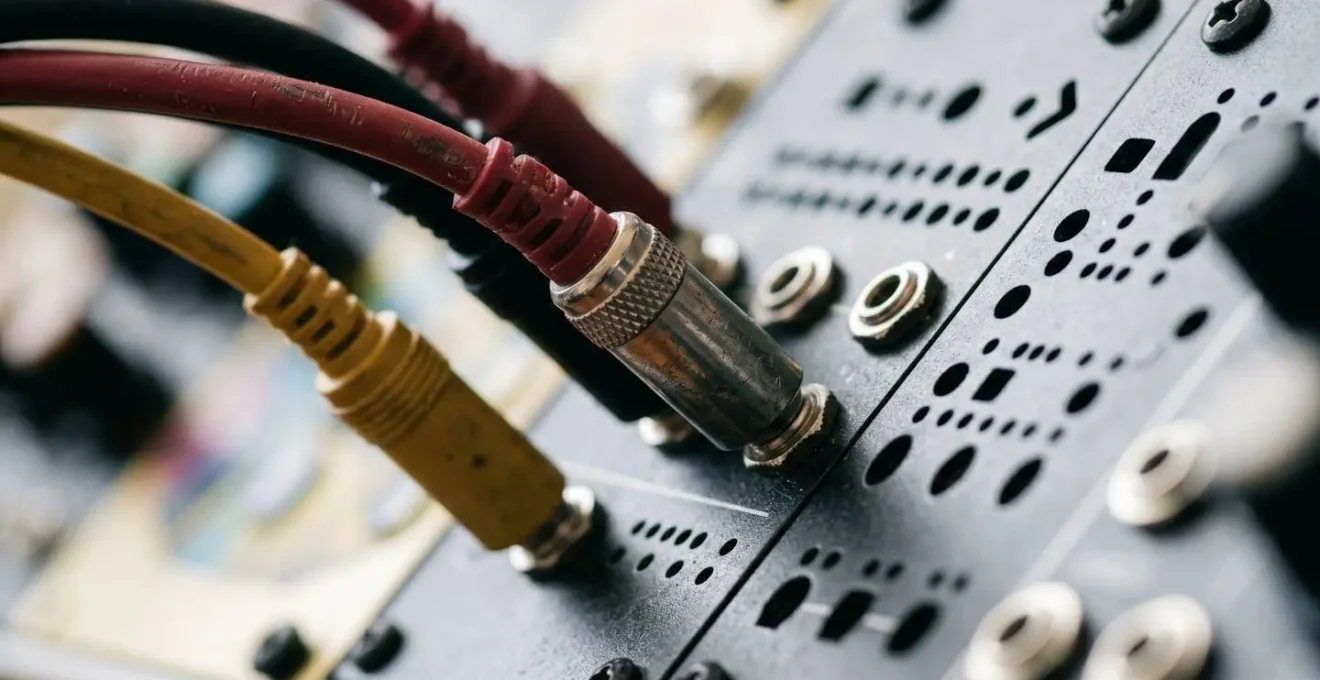

The debate over analog hardware versus digital plugins is one of electronic music’s most enduring—and misleading—arguments. Many producers, frustrated with “cold” digital sounds, chase the perceived warmth and mojo of vintage gear, believing the tool itself is the source of character. While hardware has a unique tactile interface, the sonic gap has narrowed to a point of near-irrelevance for the listener. As one engineer on a popular audio forum bluntly put it, the aversion is often psychological: “I’d be willing to bet my house that your aversion is to ‘digital’ but NOT what it is capable of. Far, far too many great products have closed the gap.”

The real difference is not in the audio output, but in the cognitive workflow. A physical synthesizer with a one-knob-per-function layout encourages experimentation and happy accidents. In contrast, many software synths hide their parameters behind tabs and menus, promoting a more linear, less exploratory process. This isn’t a failure of digital audio, but a failure of interface design.

A technical report from The Open University on synthesizer interfaces supports this view. The study found that modern synth UIs often create significant cognitive barriers for musicians: they require users to understand the system’s specific architecture and terminology rather than allowing intuitive, musically driven interaction. This procedural design pushes the user into an analytical, step-by-step mindset and crowds out the exploratory play that leads to unique sounds. The problem isn’t that the plugin sounds bad; it’s that its design makes it difficult to discover what it’s truly capable of. Escaping the “digital sound” is not about buying hardware; it’s about finding software with interfaces that invite play, or developing the discipline to dig past the clumsy UI of powerful tools.

The Headroom Mistake That Makes 70% of Bedroom Producer Mixes Sound Congested

One of the most common hallmarks of a “dated” or “amateur” electronic mix is a lack of dynamic range. In an effort to make tracks sound loud and competitive, many producers push every element to its maximum level, eliminating the crucial space known as headroom. This results in a mix that feels congested, fatiguing, and devoid of impact. Individual sounds, no matter how well-designed, lose their definition and are smothered in a dense wall of sound. The issue is exacerbated by the ongoing “loudness wars,” where the perceived-loudness bar is constantly being raised.

Data from mastering platforms shows just how compressed modern music has become. According to a 2024 analysis, the average integrated loudness of commercial releases is now a startlingly loud -8.3 LUFS. While this level may be appropriate for certain club-focused genres, adopting it as a universal target during production is a recipe for a lifeless mix. Leaving headroom isn’t just a technical task for the mastering engineer; it’s a compositional choice that preserves the power and clarity of your arrangement.

Think of headroom as the negative space in a painting. It’s the silence between notes that gives them meaning and the space around a sound that allows it to breathe. By aiming for lower average levels during your mixdown (e.g., leaving 6 dB of headroom on your master bus), you create the space needed for transient peaks, such as a snare drum or a synth pluck, to cut through with punch and authority. This dynamic contrast is what makes a mix feel alive, professional, and ultimately, timeless. A quiet, dynamic mix can always be made louder in mastering, but a congested mix that’s been clipped and limited at every stage has lost its internal dynamics forever.
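The headroom arithmetic is simple enough to check by hand or in code. The sketch below assumes audio as a list of floats where 1.0 is digital full scale; the function names are hypothetical. It measures sample peak and RMS in dBFS, not LUFS (integrated loudness requires the K-weighting and gating defined in ITU-R BS.1770), and it ignores inter-sample “true peak” overshoot.

```python
import math


def peak_dbfs(samples):
    """Highest absolute sample value, in dB relative to full scale."""
    peak = max(abs(s) for s in samples)
    return -math.inf if peak == 0 else 20 * math.log10(peak)


def rms_dbfs(samples):
    """Root-mean-square level in dBFS, a rough proxy for average level."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return -math.inf if mean_square == 0 else 10 * math.log10(mean_square)


def crest_factor_db(samples):
    """Peak-to-RMS distance in dB; small values suggest a squashed signal."""
    return peak_dbfs(samples) - rms_dbfs(samples)


def gain_for_headroom(samples, headroom_db=6.0):
    """Linear gain that places the highest peak at -headroom_db dBFS."""
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return 1.0
    target_peak = 10 ** (-headroom_db / 20)
    return target_peak / peak


# Example: pull a hot mix bus down so it peaks at -6 dBFS.
mix_bus = [0.2, -0.9, 0.5, 0.97, -0.4]
gain = gain_for_headroom(mix_bus, headroom_db=6.0)
mix_with_headroom = [s * gain for s in mix_bus]
```

A peak at -6 dBFS corresponds to a linear amplitude of about 0.5, so half of the available range is left free for later processing. Note that scaling the whole bus changes the level but not the crest factor; the internal dynamics are decided by how hard individual tracks and the bus are compressed and limited.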

When to Introduce Electronics into an Acoustic Ensemble Piece: From the Start or as Development?

For composers working at the intersection of electronic and acoustic worlds, the question of integration is paramount. Should the electronics be present from the outset, establishing a hybrid world, or should they emerge later as a developmental surprise? There is no single correct answer, as the choice is fundamentally an aesthetic and structural decision, not a technical one. The most successful integrations treat the electronic and acoustic elements as equal partners in a unified narrative, rather than as separate entities.

Introducing electronics from the start creates a sound world where the synthetic and the organic are intrinsically linked. This approach allows the composer to explore the seamless blending and morphing of timbres—a flute note might subtly transform into a filtered sine wave, or a string section could be shadowed by a granular texture. Here, the electronics are not an “effect” but a core part of the instrumental palette. This method is often most effective when the electronic part is derived from or is in direct dialogue with the acoustic material.

Alternatively, holding back the electronics for a later section can create a powerful moment of structural transformation. The piece can begin in a familiar acoustic space, establishing musical themes and ideas, only to have that world fractured or expanded by the sudden entry of a synth pad or a burst of digital noise. This can be incredibly dramatic, serving as a formal marker or signifying a shift in the piece’s emotional core. The risk, however, is that the electronics may feel like an afterthought if their material is not thematically justified by what came before. As Splice’s CEO Kakul Srivastava observed in a recent report, “Music has entered an era where the biggest trends are personal. Our data shows creators pulling inspiration from everywhere at once, blending global sounds and local scenes to create music that feels both deeply human and culturally expansive.” This philosophy of blending should be the guide; the best choice is the one that serves the piece’s unique expressive journey.

Why Do Your ii-V-I Progressions Sound Generic Despite Perfect Voice Leading?

You’ve mastered the theory. Your ii-V-I progressions use beautiful, smooth voice leading, with each note moving by the smallest possible interval. Yet, when you play them on your go-to piano or synth patch, they sound like a textbook exercise—correct, but emotionally vacant. This is a classic case where harmonic sophistication is let down by timbral simplicity. The solution lies in extending the logic of voice leading from the realm of pitch to the realm of timbre itself. This is the concept of Timbral Voice Leading.

Timbral Voice Leading treats the sonic character of each note in a chord as a voice that can move and evolve. Instead of a static sound playing all the notes, imagine each note in your ii chord having a slightly different filter cutoff or waveform. As you move to the V chord, these timbral characteristics shift logically. Perhaps the filter on the root note opens up while the filter on the third closes slightly, creating a subtle, internal contrapuntal movement within the sound itself. As you resolve to the I chord, the timbres might coalesce into a purer, more stable state.

This approach transforms a static harmonic progression into a dynamic, living texture. The timbral evolution adds another layer of narrative and emotional direction that harmony alone cannot provide. A simple way to begin exploring this is with a multi-timbral synthesizer or by using separate synth instances for each voice in your chord. Assign a basic patch to each, then create subtle variations in filter resonance, envelope attack, or LFO speed for each voice. The goal is not to create a chaotic mess, but a coherent evolution of texture that mirrors and enhances the underlying harmonic movement. By thinking this way, your ii-V-Is will no longer be just a set of chords; they will become unique sonic events.
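One way to plan Timbral Voice Leading before touching a synth is to write the per-voice settings down as data. The sketch below is a hypothetical score for a ii-V-I in C major: the chord names, voice labels, and cutoff values are illustrative assumptions, not a prescription. Each voice gets its own filter cutoff per chord, and the values glide between chords. The interpolation is geometric, since equal frequency ratios tend to sound like more even steps than equal differences in Hz.

```python
# Hypothetical per-voice filter cutoffs (Hz) for each chord of a ii-V-I.
PROGRESSION = [
    ("Dm7",   {"root": 500.0,  "third": 1800.0, "seventh": 1200.0}),
    ("G7",    {"root": 1400.0, "third": 1500.0, "seventh": 1600.0}),
    ("Cmaj7", {"root": 900.0,  "third": 900.0,  "seventh": 900.0}),
]


def glide(start_hz, end_hz, position):
    """Geometric interpolation from start_hz (position 0) to end_hz (position 1)."""
    return start_hz * (end_hz / start_hz) ** position


def cutoffs_at(progress):
    """Per-voice cutoffs at a point in the progression.

    progress: 0.0 = ii, 1.0 = V, 2.0 = I; fractions are mid-transition.
    """
    last = len(PROGRESSION) - 1
    progress = min(max(progress, 0.0), float(last))
    index = min(int(progress), last - 1)
    position = progress - index
    _, current = PROGRESSION[index]
    _, following = PROGRESSION[index + 1]
    return {
        voice: glide(current[voice], following[voice], position)
        for voice in current
    }


# Halfway between ii and V: the root is opening while the third closes.
halfway = cutoffs_at(0.5)
```

From ii to V the root’s filter opens while the third’s closes slightly, and on the I chord all three voices converge on the same cutoff, matching the “coalesce into a purer, more stable state” idea. The resulting values could drive automation lanes on separate synth instances, one per voice.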

Why Does Every Midjourney User’s Portfolio Look Identical After 6 Months?

The challenge of sonic homogeneity in music production has a startlingly clear parallel in the world of AI art. Tools like Midjourney offer immense power, but they also possess a strong “house style”—a default aesthetic that is easy to achieve and difficult to escape. New users are often amazed by the initial results, but after a few months of use, a portfolio can begin to look strikingly similar to thousands of others. This is because the user is operating within the tool’s path of least resistance, guided by popular prompting techniques and the model’s inherent biases.

This problem is amplified by the sheer volume of creative content being produced daily. With more than 100,000 new recordings uploaded daily to streaming platforms, standing out is not just an artistic goal; it’s a practical necessity. Whether in music or visual art, relying on a tool’s default output is a surefire way to get lost in the noise. The most celebrated AI artists are not those who are best at writing basic prompts, but those who develop complex workflows that involve multi-prompting, image blending, and significant post-processing in other software. They are intentionally breaking the tool’s default behavior.

The solution, as in music, is to reintroduce a human, often “imperfect,” element into the workflow. It’s about using the AI not as a final image generator, but as a source of raw material—a texture, a lighting idea, a compositional sketch—that is then manipulated, deconstructed, and integrated into a personal vision. The Midjourney problem is a perfect metaphor for the preset-scrolling musician: both are at risk of letting the tool’s aesthetic signature overwrite their own.

Key takeaways

- Sonic homogeneity arises from trend-driven workflows and over-reliance on presets, not from a lack of good tools.

- Building sounds from basic oscillators with “intentional friction” is the most direct path to developing a signature sound.

- The concept of “Timbral Voice Leading” applies compositional logic to the evolution of sound texture, breathing life into standard harmonic progressions.

Why Do Your AI-Assisted Artworks Look Like Everyone Else’s AI Art?

We’ve seen that the dilemma facing a composer with a library of powerful plugins is identical to that of a visual artist using an AI generator. The underlying challenge is not about the specific tool, but about developing a workflow that transcends the tool’s inherent biases. The ultimate goal is to move from being a sophisticated operator of a system to being a composer with an aesthetic signature. This final step is about synthesizing all the previous concepts into a universal creative methodology.

This methodology has three pillars. The first is Deconstruction: whether it’s a synth preset or an AI-generated image, you must have a process for breaking it down into its constituent parts to understand how it was made. The second is Intentionality: rebuilding that element from scratch, or heavily modifying it, with a clear compositional purpose in mind. You are not searching for a sound; you are building the sound that the music requires. The third is Integration: blending these custom-built elements with other materials, whether they are acoustic instruments or samples from different contexts, to create a hybrid sound world that is uniquely yours.

This approach fundamentally reframes your relationship with technology. Software and AI cease to be magic boxes that spit out finished products. They become what they should be: incredibly powerful sources of raw material and sophisticated tools for manipulation. Your artistry is expressed in the choices you make during this process of deconstruction and reconstruction. It’s in the subtle detuning of an oscillator, the precise curve of a filter envelope, and the dynamic space you leave in a mix. This is where your aesthetic signature is forged.

Begin today by choosing one synth preset you often use and applying this deconstructive workflow to it. Break it, reshape it, and transform it from a generic tool into a personal statement. This is the first step toward building a truly unique sonic identity.