The frustrating similarity in AI art stems not from a lack of prompt-craft, but from a failure to impose a unique artistic vision upon the machine.

- The default settings and popular models of dominant platforms naturally lead to an “aesthetic monoculture” where most outputs converge.

- True distinction is achieved by moving from tool operator to conceptual director—training models on your own curated data and worldview.

Recommendation: Stop refining your prompts and start building your own conceptual and data frameworks. This is the only durable path to an authentic AI-assisted practice.

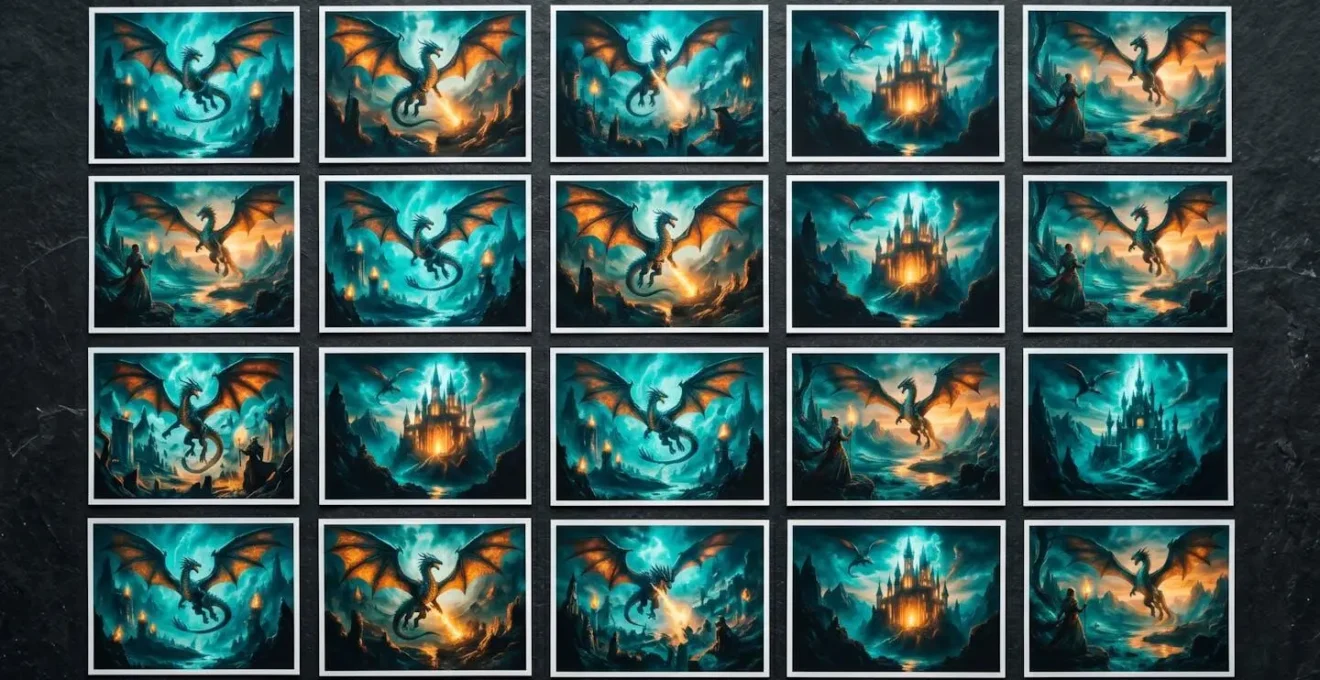

There’s a specific, sinking feeling familiar to every artist working with generative tools. You spend hours coaxing a vision from the latent space, refining prompts, and finally, the machine delivers a technically flawless, aesthetically pleasing image. But as you scroll through your portfolio—and the feeds of others—a quiet horror dawns: it all looks the same. The same ethereal glow, the same fantasy tropes, the same hyper-polished but soulless perfection. It’s the digital equivalent of every café playing the same chillhop playlist.

The common advice to “write better prompts” or “learn prompt engineering” fundamentally misses the point. It treats the problem as a syntax error, a failure to speak the machine’s language correctly. This is a trap. It keeps you locked in the role of a supplicant, asking the machine for its interpretation of your ideas. The resulting work will always be an echo of the tool’s biases and the visual diet it was fed, a vast dataset of existing internet imagery.

This guide argues for a radical shift in perspective. To escape the gravity of the generic, you must transcend the tool. The key is not to become a better prompter, but to become a more demanding artistic director. It requires moving beyond merely describing an image to actively cultivating a personal dataset, training the machine on your unique worldview, and making conscious, often difficult, choices about authorship and transparency. It’s about forging a signature, not just generating a picture.

This article will deconstruct the forces that push AI-assisted art towards homogeneity and provide a strategic framework for resisting them. We will explore practical methods for developing a unique visual language and navigate the complex conceptual and legal terrain that comes with a truly pioneering practice.

Summary: Forging a Unique Visual Signature in the Age of AI Art

- Why Does Every Midjourney User’s Portfolio Look Identical After 6 Months?

- How to Train Your Own LoRA Model for Recognisably Personal AI Imagery?

- Artist-Directed Prompting vs Emergent Curation: Which Produces More Interesting Results?

- The AI Training Dataset That Put a UK Artist in Legal Jeopardy

- When to Present AI Collaboration Openly vs Integrate It Invisibly into Practice?

- Why Does That Factory Pad Appear in 500 Other Tracks This Year?

- Why Do All Graduates from Your Programme Move the Same Way in Their First Commissions?

- Why Do Some Artists Fear AI While Others Embrace It as a Collaborator?

Why Does Every Midjourney User’s Portfolio Look Identical After 6 Months?

The phenomenon of aesthetic convergence isn’t an accident; it’s a systemic outcome. When a single platform like Midjourney captures over 26.8% of the global generative AI image market, its default aesthetic becomes an overwhelmingly dominant visual dialect. Millions of users, starting from the same base models and guided by the same popular tutorials, inevitably begin to produce work that clusters around a recognisable, platform-specific “house style.” This creates a powerful feedback loop: popular images inform future prompts, further reinforcing the dominant aesthetic.

This process results in what can only be described as an aesthetic monoculture. The novelty wears off quickly, replaced by a sense of déjà vu. As the editorial team at iA Writer notes, the effect is palpable even to casual observers.

most AI images now look the same to you. They may still seem aesthetically appealing, somewhat, technically, at first, but they are still quickly recognizable as AI images.

– iA Writer editorial team, AI Art is The New Stock Image

This isn’t a failure of the user’s imagination so much as a consequence of the tool’s design. The path of least resistance—using default settings and simple prompts—leads directly into this visual echo chamber. Escaping requires a conscious and deliberate effort to push against the system’s gravitational pull, rejecting the easy, polished output in favour of something more personal and, often, less immediately perfect.

How to Train Your Own LoRA Model for Recognisably Personal AI Imagery?

If default models create an aesthetic monoculture, the most powerful act of resistance is to create your own. Training a Low-Rank Adaptation (LoRA) model is the first truly significant step from being a tool operator to becoming an artistic director. A LoRA allows you to inject your specific stylistic or conceptual DNA into a base model, effectively teaching the AI *your* visual language. It’s the difference between describing a face and showing the AI a folder of 100 family portraits to make it understand your specific idea of “face.”

The process is more accessible than ever and forces a level of artistic intentionality that simple prompting bypasses. You must curate, select, and prepare a dataset that embodies your vision. This act of curation is itself an artistic practice. A powerful example of this is seen in the work of UK-based artist Anna Ridler, whose methodology represents the pinnacle of what can be called conceptual training.

Case Study: Anna Ridler’s “Myriad (Tulips)” and Data as Artistic Medium

UK-based artist Anna Ridler created ‘Myriad (Tulips)’, a training dataset of 10,000 individually photographed and hand-labelled tulip images. Rather than scraping existing imagery, Ridler spent three months capturing unique tulips in Utrecht, manually categorising each by colour, size, and shape. This dataset was then used to train a GAN for her ‘Mosaic Virus’ installation, which generates AI tulips responsive to Bitcoin price fluctuations. Ridler’s approach exemplifies ‘conceptual training’: teaching the AI a personal thematic framework rather than mimicking existing styles. Because every image is her own, the methodology sidesteps the copyright and sourcing questions that come with scraped data, and it yields distinctive output rooted in the artist’s own creative labour and vision.

While not every project requires three months of photography, Ridler’s process highlights the core principle: the quality and uniqueness of your training data directly determine the originality of your output. Following a structured approach is key to achieving a usable and personal model.

Your Action Plan: Training a Personal LoRA Model

- Gather Your Vision: Collect at least 15 high-quality training images, and ideally closer to 30, that represent your unique conceptual or stylistic vision (512×512 pixels minimum for SD 1.5 models).

- Standardise and Diversify: Ensure images are in PNG or JPEG format and represent diverse angles, lighting, and contexts within your chosen theme.

- Automate Your Annotation: Upload images to your training platform and configure automatic captioning (e.g., Deepbooru), adjusting the threshold to around 0.6 for optimal tag accuracy.

- Claim Your Trigger: Define a unique trigger word to invoke your LoRA. Choose something distinctive that is unlikely to appear in the base model’s vocabulary.

- Choose a Foundation: Select a base model (e.g., Anything V5 for anime, RealisticVision for photorealism) that aligns with your intended final aesthetic.

- Monitor the Learning: Keep an eye on training loss values, aiming for 0.1-0.12 for realistic images, and save checkpoints every few epochs to test different stages.

- Test for Generalisation: Test your trained LoRA with a variety of prompts to see how well it applies its new knowledge to different contexts, refining if it overfits or underfits.
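The checklist above can be partly automated before and after a training run. The following is a minimal sketch under stated assumptions, not a definitive tool: the names `ImageInfo`, `dataset_problems`, `checkpoints_in_band` and `generalisation_prompts` are hypothetical, image dimensions are assumed to have been read already with whatever imaging library you use, and the thresholds are simply the figures quoted in the plan.

```python
from dataclasses import dataclass
from pathlib import PurePath

# Formats named in the action plan (PNG or JPEG).
ALLOWED_SUFFIXES = {".png", ".jpg", ".jpeg"}


@dataclass
class ImageInfo:
    """Hypothetical record for one training image; dimensions are read elsewhere."""
    filename: str
    width: int
    height: int


def dataset_problems(images, min_count=15, min_side=512):
    """Return a list of human-readable problems; an empty list means the checks pass."""
    problems = []
    if len(images) < min_count:
        problems.append(f"only {len(images)} images, need at least {min_count}")
    for img in images:
        suffix = PurePath(img.filename).suffix.lower()
        if suffix not in ALLOWED_SUFFIXES:
            problems.append(f"{img.filename}: unsupported format {suffix or '(none)'}")
        if min(img.width, img.height) < min_side:
            problems.append(
                f"{img.filename}: {img.width}x{img.height} is below {min_side}px"
            )
    return problems


def checkpoints_in_band(losses, low=0.10, high=0.12):
    """Given {epoch: loss}, return the epochs whose loss sits in the target band."""
    return [epoch for epoch, loss in sorted(losses.items()) if low <= loss <= high]


def generalisation_prompts(trigger, contexts):
    """Pair the trigger word with varied contexts to probe over- or underfitting."""
    return [f"{trigger}, {context}" for context in contexts]
```

Running `dataset_problems` on your image list before uploading gives a quick sanity check, `checkpoints_in_band` shortlists saved checkpoints worth testing, and `generalisation_prompts` builds a small prompt set from a trigger word of your choosing. The loss band is a rule of thumb from the plan rather than a guarantee of quality, so the shortlisted checkpoints still need to be judged by eye.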

Artist-Directed Prompting vs Emergent Curation: Which Produces More Interesting Results?

Once an artist moves beyond default tools, a fundamental philosophical question arises: what is the ideal relationship with the machine? Should the artist be a meticulous director, specifying every detail through complex prompts? Or should they be a curator, generating a volume of chaotic outputs and sifting through them for moments of emergent, unexpected beauty? This debate sits at the heart of contemporary AI art practice. There is no single right answer, only a spectrum of collaborative styles.

Artist-Directed Prompting is about control. It involves crafting long, highly specific prompts with weighted terms, negative prompts, and precise commands to force the model toward a preconceived vision. This method is excellent for commercial work or projects where a specific outcome is required. However, it can also stifle the AI’s “creativity,” preventing the happy accidents and strange juxtapositions that often lead to the most compelling work. It risks turning the artist into a micromanager of a silicon intern.

Emergent Curation, conversely, is about surrender and discovery. It involves using shorter, more abstract prompts to see what the model produces, then curating the results. This approach embraces the AI as a true collaborator, a source of unpredictable ideas. It’s less about realising a vision and more about discovering one. The danger here is a lack of direction, leading to a portfolio of interesting but disconnected images. Recent research on AI art practices shows that 20.7% of artists already emphasise a balance of co-creation between their own direction and the machine’s contribution. The most profound results often come from exactly this kind of hybrid approach: curating emergent results generated from a *personally trained model*. This combines the intentionality of a unique dataset with the unpredictability of the generative process, creating a space for genuine, artist-guided discovery.

The AI Training Dataset That Put a UK Artist in Legal Jeopardy

Training a custom model on a unique dataset promises the ultimate path to originality. However, it opens a legal and ethical minefield, particularly for artists based in the UK. The question of “what can I legally use for training data?” is one of the most pressing and uncertain issues in the creative industries today. An artist’s innocent attempt to build a better dataset by scraping images could inadvertently expose them to significant legal risk, including copyright infringement claims.

This isn’t a hypothetical fear. Concerns are widespread, with a 2024 survey by DACS revealing that 74% of artists worry their work is being used to train AI models without their consent. For artists building their own datasets, the tables are turned, and navigating the shifting legal landscape becomes paramount. The UK government’s own indecision on the matter highlights the complexity of the issue.

Case Study: The UK’s Evolving Stance on Copyright and AI Training

In December 2024, the UK government published proposals to reform how copyright-protected material can be used for AI training, leaning towards a ‘rights reservation’ model where creators must actively opt out. This was a reversal from a widely criticised 2022 proposal to simply extend the text and data mining (TDM) exception to commercial use, which would have allowed widespread training on copyrighted works without permission. This back-and-forth, heavily influenced by lobbying from groups like the Creative Rights in AI Coalition, shows that the legal ground is still moving. For a UK artist, this means that sourcing training data from the open web is a high-risk activity, and relying on fully licensed, public domain, or self-generated data (as Anna Ridler did) is the only truly safe harbour.

The key takeaway for any artist in the UK is to assume that no right to use copyrighted material for commercial AI training exists without explicit permission. The convenience of a web-scraped dataset is not worth the potential legal and financial fallout. Building an ethical and legally compliant dataset is not a constraint on creativity; it is a foundational requirement for a sustainable artistic practice.

When to Present AI Collaboration Openly vs Integrate It Invisibly into Practice?

Once an artist has developed a unique process, another critical decision emerges: how to frame the work publicly. Do you loudly proclaim “This is an AI collaboration,” making the process central to the concept? Or do you present the final image as an artwork in its own right, with the tools used remaining as background information, much like a photographer doesn’t always specify their camera and lens? This choice is heavily influenced by the persistent and challenging public perception of AI’s role in art. Strikingly, survey data reveals that 76% of respondents don’t think AI art qualifies as art. This statistic forms the backdrop against which every artist must make their decision.

Choosing to integrate AI invisibly is often a pragmatic response to this scepticism. An artist may feel the “AI” label distracts from the work’s conceptual or aesthetic merits, leading to tiresome debates about authenticity rather than engagement with the art itself. They want the work to be judged as an image, not as a technological artefact. This is a valid, defensive posture in a world not yet fully comfortable with computational creativity.

The alternative is a strategy of radical transparency, where the artist embraces the AI collaboration as a core part of the narrative. This approach often involves exhibiting the process, the code, or the datasets alongside the final output. London-based artist Anna Ridler is a leading proponent of this method, viewing transparency not as a confession, but as a conceptual necessity.

I’m not using AI to critique AI, but rather using AI to push what drawing can be into new realms. Part of doing this is by trying to use it with the body, by making it real and tangible and not just hidden away on a screen.

– Anna Ridler, as quoted on AIArtists.org

By making her datasets artworks in themselves, Ridler forces the viewer to confront the immense human labour, curation, and intention behind the machine’s output. This reframes the conversation from “Is it art?” to “How was this made, and what does it mean?” For artists looking to forge a unique signature, this transparent approach offers a powerful way to claim authorship over not just the final image, but the entire collaborative process.

Why Does That Factory Pad Appear in 500 Other Tracks This Year?

The problem of aesthetic monoculture is not unique to visual art; it has a direct parallel in music production. The title of this section refers to the phenomenon of a single popular synthesizer preset—a “factory pad”—becoming ubiquitous in countless tracks. It sounds good, it’s easy to use, and it quickly becomes a sonic cliché. This is precisely what happens with popular prompts, styles, and character models in generative AI. They are the “factory pads” of visual art.

The core issue is the seductive nature of high-quality defaults. When a tool offers a polished, professional-sounding (or -looking) result with minimal effort, the incentive to dig deeper and create something from scratch diminishes. Research into the evolution of design acknowledges this double-edged sword. As one study notes, the very tools that streamline creation can also enforce a stifling conformity.

Generative approaches such as DALL-E and Midjourney have advanced concept development while eliciting concerns around stylistic uniformity and authorship.

– Study authors, The Impact of Artificial Intelligence on the Evolution of Graphic Design

The solution, for both musicians and visual artists, is the same: you must move beyond the presets. For a musician, this means learning synthesis to sculpt their own sounds. For an AI artist, it means learning to train their own models, manipulate parameters beyond the surface-level prompt, and integrate outputs into a broader workflow involving traditional digital tools like Photoshop or Procreate. The AI-generated image should be viewed as raw material—the equivalent of a single, unique instrument sound—not the finished composition. Resisting the factory preset is a foundational act of artistic identity.

Why Do All Graduates from Your Programme Move the Same Way in Their First Commissions?

Art schools and creative programmes have always faced the challenge of teaching foundational skills without producing a cohort of clones. The best programmes foster a signature style in their students; the worst instil a rigid institutional aesthetic. Today, the “programme” for many aspiring AI artists is not a university, but a loose curriculum of YouTube tutorials, Discord community trends, and popular prompt-sharing websites. This self-directed but highly conformist education is creating a new generation of artists who all “move the same way.”

They learn the same prompt formulas for achieving the “cinematic” look, the same negative prompts to eliminate imperfections, and the same trending artists to cite for style. While this is an effective way to quickly learn the tool’s mechanics, it’s a disastrous way to develop an artistic voice. The artist learns to replicate a process rather than develop a practice. They are learning a recipe, not how to cook. This shortcut to technical proficiency comes at the cost of conceptual depth and originality, a concern echoed in academic critiques of the technology’s impact.

Unregulated AI training on extensive datasets threatens to homogenise designs, undermining cultural significance. Ethical frameworks are essential to guarantee that AI augments rather than supplants human innovation.

– Study authors, The Impact of Artificial Intelligence on the Evolution of Graphic Design

Breaking this cycle requires a conscious decision to go off-piste. It means seeking out obscure techniques, deliberately breaking the “rules” taught in tutorials, and feeding the AI unconventional inputs. It means developing a personal “research and development” practice that is separate from producing finished images. Spend a week trying to generate only “ugly” or “unsettling” images. Train a model on your childhood drawings. Use the tool to do things it wasn’t designed for. You must actively unlearn the popular methods to discover your own.

Key Takeaways

- Monoculture is the Default: The market dominance and design of major AI platforms naturally create a generic “house style” that users must actively fight against.

- Your Data is Your Voice: True originality comes from training models on your own, unique datasets, turning the act of curation into an artistic practice in itself.

- Process is Political: Navigating UK copyright law and deciding on a strategy for transparency are not side-issues; they are central, defining acts of a mature AI-assisted practice.

Why Do Some Artists Fear AI While Others Embrace It as a Collaborator?

The discourse surrounding AI in the arts is sharply divided, often casting artists into two opposing camps: the fearful Luddites and the optimistic futurists. The fear is tangible and, in many ways, justified. It’s a fear of displacement, of devaluation, and of a future where creative skills honed over a lifetime are rendered obsolete by a machine. This anxiety is not just philosophical; it’s economic. With economic impact surveys showing that 55% of artists worry AI will hinder their income generation, the fear is rooted in a real concern for livelihood.

However, framing the debate as a simple binary of fear versus embrace is a gross oversimplification. The artists who are truly pushing the medium forward are neither blind optimists nor fearful reactionaries. They are critical collaborators. They embrace the tool not for what it is, but for what it could be when subverted, customised, and pushed to its limits. They see the AI not as a competitor, but as a profoundly powerful, endlessly strange, and occasionally frustrating studio assistant.

This “critical embrace” involves acknowledging the tool’s inherent flaws—its biases, its tendency toward cliché, its legal ambiguities—and making those flaws part of the work itself. It means choosing the difficult path of training a personal model over the easy path of a prompt. It means having the courage to be transparent about the process. It means shifting your identity from “one who makes images” to “one who designs systems that make images.” This is where the most exciting work is happening, at the intersection of artistic vision and computational logic.

The future of AI art will not be defined by the tools themselves, but by the artists who refuse to accept their limitations. It will be forged by those who see the aesthetic monoculture not as a destination, but as a starting point to rebel against. By moving beyond the prompt, you don’t just create a unique image; you assert your role as an artist in a world that increasingly questions it.

To truly innovate, you must stop asking the machine what it can do for you and start dictating what you will do with it. Begin building your unique dataset today.